Abstract

Derivational robustness may increase the degree to which various pieces of evidence indirectly confirm a robust result. This increase may come about in two ways. First, if one can show that a result is robust, and that the individual models used to derive it also have other confirmed results, these other results may indirectly confirm the robust result. The confirmation arises because data not previously known to bear on the result are shown to be relevant once the result is shown to be robust. Second, robustness may increase the degree to which the robust result is indirectly confirmed if it increases the weight with which existing evidence indirectly confirms that result. This may happen when robustness strengthens the connection between the core and the robust result by showing that the auxiliaries are not responsible for the result.


Notes

See e.g., the special issue (2010, vol. 41) on climate change in Studies in History and Philosophy of Modern Physics. Räisänen (2007) provides a non-technical introduction by a climatologist.

I discuss the context dependence of confirmation via robustness further in Lehtinen (2016). I show, for example, that robustness may entirely fail to confirm even when there is indirect empirical evidence, but also that it is possible that a given initially non-confirming demonstration of robustness may become confirmatory later if the epistemic situation is modified in the right way.

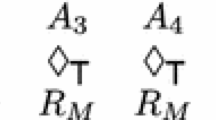

The ‘┬’ and ‘├’ signs refer to the entailment relation, and the vertical line ‘|’ to a direct model fit.

I do not intend to argue for HD as opposed to other accounts of confirmation by applying Gemes’ account, and neither did Gemes by presenting it (see e.g., Gemes 1993).

See Hartmann and Fitelson (2015) for an account in which old evidence confirms even in cases weaker than entailment.

See Hands (2016) for a study of robustness with virtually no empirical evidence.

‘Future temperature increase’ and ‘increase in greenhouse gases’ may refer to various things, but the details are not needed in this paper. There are different scenarios of future CO2 emissions and various ways to conceptualise future temperature increases. Equilibrium Climate Sensitivity (ECS) determines the long-term equilibrium warming response to a stable atmospheric composition, but it does not account for vegetation or ice-sheet changes. Transient Climate Response (TCR) measures the magnitude of transient warming while the climate system, particularly the deep ocean, is not in equilibrium. Transient Climate Response to Cumulative CO2 emissions (TCRE) measures the transient warming response to a given mass of CO2 injected into the atmosphere, and combines information on both the carbon cycle and the climate response. TCR is estimated, with high confidence, to be likely between 1 and 2.5 °C and extremely unlikely to be greater than 3 °C (Bindoff and Stott 2013, pp. 6, 59–60).

Climate modellers appear to think that RM is robust, however: ‘Models are unanimous in their prediction of substantial climate warming under greenhouse gas increases, and this warming is of a magnitude consistent with independent estimates derived from other sources, such as from observed climate changes and past climate reconstructions’ (Randall et al. 2007, p. 601).

The history of climate science involves adding various elements to a model that becomes larger and larger (see Edwards 2010 for an extensive history of climate science). For example, the coupling of ocean and atmosphere models was a major breakthrough, and modules for vegetation and sea ice were added later. One could thus also interpret (6) as the result of successive models. If M1 had been the first model in time, M2 the second, and so on, the situation would have been described as follows: M3 ⊢ R1, R2, R3; M2 ⊢ R1, R2 but M2 ⊬ R3; and M1 ⊢ R1 but M1 ⊬ R2, R3.

The Navier–Stokes equations belong to what modellers often refer to as the ‘physical core’ of climate models. In this paper, however, the ‘core’ merely refers to CO2 forcing. Katzav (2013) argues that these equations cannot be confirmed because we know them to be true already (see Yablo 2014, p. 101 for a more general claim to this effect).

See Houkes and Vaesen (2012), Odenbaugh (2011), Odenbaugh and Alexandrova (2011) and Woodward (2006). See Katzav (2013, 2014) for a version of this criticism that is specifically targeted at climate models. Kuorikoski et al. (2012) provide a rejoinder to Odenbaugh and Alexandrova's version of this argument.

As noted earlier (see fn. 9), there are slightly different estimates of the sensitivity of the climate to different forms of forcing.

See e.g., Bindoff and Stott (2013, FAQ 10.1). The report uses these terms to discuss the possibility that an alternative account might explain the observed global warming, namely the idea that internal variability alone is sufficient: ‘…we conclude that it is virtually certain that internal variability alone cannot account for the observed global warming since 1951’ (p. 22). See Parker (2010a) for a philosophical analysis of fingerprint results from attribution studies that derive results like (5″).

Despite such results, the attribution to greenhouse gases is not perfect, because climate-simulation models contain hundreds of thousands of lines of computer code, some parts of which have remained unchanged for decades. Insofar as not all of the code has been checked, it remains possible in principle that errors in it generate the confirmed results.

They thus argue that variety of evidence allocates the confirmation to C.

References

Achinstein, P. (2001). The book of evidence. New York; Oxford: Oxford University Press.

Bangu, S. (2006). Underdetermination and the argument from indirect confirmation. Ratio: An International Journal of Analytic Philosophy, 19, 269–277.

Bindoff, N. L., & Stott, P. A. (2013). Detection and attribution of climate change: from global to regional. In J. Bartholy, R. Vautard, & T. Yasunari (Eds.), Climate change 2013: The physical science basis. Working group I contribution to the IPCC fifth assessment report (AR5) (pp. 1–132). Cambridge: Cambridge University Press.

Earman, J. (1992). Bayes or bust? A critical examination of Bayesian confirmation theory. Cambridge, MA: MIT Press.

Edwards, P. N. (2010). A vast machine: computer models, climate data, and the politics of global warming. Cambridge, MA: MIT Press.

Fitelson, B. (2001). A Bayesian account of independent evidence with applications. Philosophy of Science, 68, 123–140.

Forber, P. (2010). Confirmation and explaining how possible. Studies in History and Philosophy of Science Part C: Studies in History and Philosophy of Biological and Biomedical Sciences, 41, 32–40.

Friedman, M. (1953). The methodology of positive economics. In Essays in positive economics (pp. 3–43). Chicago: University of Chicago Press.

Garber, D. (1983). Old evidence and logical omniscience in Bayesian confirmation theory. In J. Earman (Ed.), Testing scientific theories (pp. 99–132). Minneapolis: University of Minnesota Press.

Gemes, K. (1993). Hypothetico-deductivism, content, and the natural axiomatization of theories. Philosophy of Science, 60, 477–487.

Gemes, K. (1994). A new theory of content I: Basic content. Journal of Philosophical Logic, 23, 595–620.

Gemes, K. (2005). Hypothetico-deductivism: Incomplete but not hopeless. Erkenntnis, 63, 139–147.

Gemes, K. (2007). Carnap-confirmation, content-cutting & real confirmation. Unpublished manuscript, Birkbeck College, London.

Glymour, C. N. (1980). Theory and evidence. Princeton: Princeton University Press.

Glymour, C. N. (1983). Discussion: Hypothetico-deductivism is hopeless. Philosophy of Science, 72, 322–325.

Gramelsberger, G. (2010). Conceiving processes in atmospheric models—general equations, subscale parameterizations, and ‘superparameterizations’. Studies in History and Philosophy of Science Part B: Studies in History and Philosophy of Modern Physics, 41, 233–241.

Guillemot, H. (2010). Connections between simulations and observation in climate computer modeling. Scientist’s practices and “bottom-up epistemology” lessons. Studies in History and Philosophy of Science Part B: Studies in History and Philosophy of Modern Physics, 41, 242–252.

Hacking, I. (1967). Slightly more realistic personal probability. Philosophy of Science, 34, 311–325.

Hands, D. W. (2016). Derivational robustness, credible substitute systems and mathematical economic models: The case of stability analysis in Walrasian general equilibrium theory. European Journal for Philosophy of Science, 6, 31–53.

Hartmann, S., & Fitelson, B. (2015). A new Garber-style solution to the problem of old evidence. Philosophy of Science, 82, 712–717.

Hempel, C. G. (1945). Studies in the logic of confirmation (II). Mind, LIV, 97–121.

Hempel, C. G. (1965). Aspects of scientific explanation, and other essays in the philosophy of science. New York: Free Press.

Houkes, V., & Vaesen, K. (2012). Robust!—Handle with care. Philosophy of Science, 79, 345–364.

Howson, C. (1991). The ‘old evidence’ problem. The British Journal for the Philosophy of Science, 42, 547–555.

Jeffrey, R. C. (1983). Bayesianism with a human face. In J. Earman (Ed.), Testing scientific theories (pp. 133–156). Minneapolis: University of Minnesota Press.

Justus, J. (2012). The elusive basis of inferential robustness. Philosophy of Science, 79, 795–807.

Katzav, J. (2013). Hybrid models, climate models, and inference to the best explanation. The British Journal for the Philosophy of Science, 64, 107–129.

Katzav, J. (2014). The epistemology of climate models and some of its implications for climate science and the philosophy of science. Studies in History and Philosophy of Modern Physics, 46, 228–238.

Knutti, R., Furrer, R., Tebaldi, C., Cermak, J., & Meehl, G. A. (2010). Challenges in combining projections from multiple climate models. Journal of Climate, 23, 2739–2758.

Knuuttila, T., & Loettgers, A. (2011). Causal isolation robustness analysis: the combinatorial strategy of circadian clock research. Biology and Philosophy, 26, 773–791.

Kuorikoski, J., & Lehtinen, A. (2009). Incredible worlds, credible results. Erkenntnis, 70, 119–131.

Kuorikoski, J., Lehtinen, A., & Marchionni, C. (2010). Economic modelling as robustness analysis. British Journal for the Philosophy of Science, 61, 541–567.

Kuorikoski, J., Lehtinen, A., & Marchionni, C. (2012). Robustness analysis disclaimer: Please read the manual before use! Biology and Philosophy, 27, 891–902.

Kuorikoski, J., & Marchionni, C. (2016). Evidential diversity and the triangulation of phenomena. Philosophy of Science, 83, 227–247.

Laudan, L. (1996). Beyond positivism and relativism: Theory, method, and evidence. Boulder, CO: Westview.

Laudan, L., & Leplin, J. (1991). Empirical equivalence and underdetermination. The Journal of Philosophy, 88, 449–472.

Lehtinen, A. (2016). Allocating confirmation with derivational robustness. Philosophical Studies, 173, 2487–2509.

Levins, R. (1966). The strategy of model building in population biology. American Scientist, 54, 421–431.

Levins, R. (1993). A response to Orzack and Sober: Formal analysis and the fluidity of science. Quarterly Review of Biology, 68, 547–555.

Lisciandra, C. (2017). Robustness analysis and tractability in modeling. European Journal for Philosophy of Science, 7, 79–95.

Lloyd, E. A. (2009). Varieties of support and confirmation of climate models. Proceedings of the Aristotelian Society, LXXXIII, 213–232.

Lloyd, E. A. (2010). Confirmation and robustness of climate models. Philosophy of Science, 77, 971–984.

Lloyd, E. A. (2012). The role of complex empiricism in the debates about satellite data and climate models. Studies in History and Philosophy of Science, 43, 390–401.

Lloyd, E. A. (2015). Model robustness as a confirmatory virtue: The case of climate science. Studies in History and Philosophy of Science Part A, 49, 58–68.

Machlup, F. (1955). The problem of verification in economics. Southern Economic Journal, 22, 1–21.

Machlup, F. (1956). Rejoinder to a reluctant ultra-empiricist. Southern Economic Journal, 22, 483–493.

Nagel, E. (1961a). The structure of science: Problems in the logic of scientific explanation. London: Routledge & Kegan Paul.

Nagel, E. (1961b). The structure of science: Problems in the logic of scientific explanation. New York: Harcourt, Brace & World.

Niiniluoto, I. (1983). Novel facts and Bayesianism. The British Journal for the Philosophy of Science, 34, 375–379.

Niiniluoto, I., & Tuomela, R. (1973). Theoretical concepts and hypothetico-inductive inference. Dordrecht: D. Reidel.

Odenbaugh, J. (2011). True lies: Realism, robustness, and models. Philosophy of Science, 78, 1177–1188.

Odenbaugh, J., & Alexandrova, A. (2011). Buyer beware: Robustness analyses in economics and biology. Biology and Philosophy, 26, 757–771.

Okasha, S. (1997). Laudan and Leplin on empirical equivalence. The British Journal for the Philosophy of Science, 48, 251–256.

Orzack, S. H., & Sober, E. (1993). A critical assessment of Levins’s The strategy of model building in population biology (1966). Quarterly Review of Biology, 68, 533–546.

Parker, W. S. (2006). Understanding pluralism in climate modeling. Foundations of Science, 11, 349–368.

Parker, W. S. (2009). Confirmation and adequacy-for-purpose in climate modelling. Proceedings of the Aristotelian Society, LXXXIII, 233–249.

Parker, W. S. (2010a). Comparative process tracing and climate change fingerprints. Philosophy of Science, 77, 1083–1095.

Parker, W. S. (2010b). Predicting weather and climate: Uncertainty, ensembles and probability. Studies in History and Philosophy of Modern Physics, 41, 263–272.

Parker, W. S. (2010c). Whose probabilities? Predicting climate change with ensembles of models. Philosophy of Science, 77, 985–997.

Parker, W. S. (2011). When climate models agree: The significance of robust model predictions. Philosophy of Science, 78, 579–600.

Parker, W. S. (2013). Ensemble modeling, uncertainty and robust predictions. Wiley Interdisciplinary Reviews: Climate Change, 4, 213–223.

Pirtle, Z., Meyer, R., & Hamilton, A. (2010). What does it mean when climate models agree? A case for assessing independence among general circulation models. Environmental Science and Policy, 13, 351–361.

Raerinne, J. (2013). Robustness and sensitivity of biological models. Philosophical Studies, 166, 285–303.

Räisänen, J. (2007). How reliable are climate models? Tellus A, 59, 2–29.

Randall, D. A., et al. (2007). Climate models and their evaluation. In S. Solomon et al. (Eds.), Climate change 2007: The physical science basis (pp. 589–662). Cambridge and New York: Cambridge University Press.

Schupbach, J. N. (2016). Robustness analysis as explanatory reasoning. The British Journal for the Philosophy of Science. doi:10.1093/bjps/axw008.

Schurz, G. (1991). Relevant deduction. Erkenntnis, 35, 391–437.

Schurz, G. (1994). Relevant deduction and hypothetico-deductivism: A reply to Gemes. Erkenntnis, 41, 183–188.

Schurz, G. (2014a). Bayesian pseudo-confirmation, use-novelty, and genuine confirmation. Studies in History and Philosophy of Science, 45, 87–96.

Schurz, G. (2014b). Philosophy of science: A unified approach. New York: Routledge.

Sprenger, J. (2015). A novel solution to the problem of old evidence. Philosophy of Science, 82, 383–401.

Staley, K. W. (2004). Robust evidence and secure evidence claims. Philosophy of Science, 71, 467–488.

Steele, K., & Werndl, C. (2013). Climate models, calibration and confirmation. British Journal for the Philosophy of Science, 64, 609–635.

Stegenga, J. (2012). Rerum concordia discors: Robustness and discordant multimodal evidence. In L. Soler, E. Trizio, T. Nickles, & W. C. Wimsatt (Eds.), Characterizing the robustness of science (pp. 207–226). London: Springer.

Suárez, M. (2004). An inferential conception of scientific representation. Philosophy of Science, 71, 767–779.

Tebaldi, C., & Knutti, R. (2007). The use of the multi-model ensemble in probabilistic climate projections. Philosophical Transactions of the Royal Society A, 365, 2053–2075.

Votsis, I. (2014). Objectivity in confirmation: Post hoc monsters and novel predictions. Studies in History and Philosophy of Science, 45, 70–78.

Weisberg, M. (2006). Robustness analysis. Philosophy of Science, 73, 730–742.

Weisberg, M. (2013). Simulation and similarity: Using models to understand the world. Oxford: Oxford University Press.

Weisberg, M., & Reisman, K. (2008). The robust Volterra principle. Philosophy of Science, 75, 106–131.

Wimsatt, W. C. (1981). Robustness, reliability and overdetermination. In M. B. Brewer & B. E. Collins (Eds.), Scientific inquiry and the social sciences (pp. 124–163). San Francisco: Jossey-Bass.

Woodward, J. (2006). Some varieties of robustness. Journal of Economic Methodology, 13, 219–240.

Yablo, S. (2014). Aboutness. Princeton: Princeton University Press.

Ylikoski, P., & Aydinonat, N. E. (2014). Understanding with theoretical models. Journal of Economic Methodology, 21, 19–36.

Acknowledgements

For their comments on earlier drafts of this paper and discussions about these issues, I would like to thank Till Grüne-Yanoff, Jaakko Kuorikoski, Chiara Lisciandra, Caterina Marchionni, Ilkka Niiniluoto, Jani Raerinne, Jouni Räisänen, Jonah Schupbach, Jacob Stegenga, the anonymous reviewers, and participants in the following conference series: PSA, Models and Simulations, INEM, NNPS, and a workshop on Robustness in Helsinki 2014. Given the complexity of the issues, the present account may still suffer from various weaknesses for which they cannot be held responsible.


Cite this article

Lehtinen, A. Derivational Robustness and Indirect Confirmation. Erkenn 83, 539–576 (2018). https://doi.org/10.1007/s10670-017-9902-6

