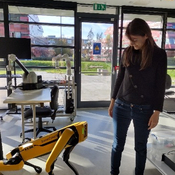

Sven Nyholm, Humans and Robots; Ethics, Agency and Anthropomorphism

Journal of Moral Philosophy 19 (2):221-224 (2022)

Abstract

How should human beings and robots interact with one another? Nyholm’s answer to this question takes the form of a conditional: if a robot looks or behaves like an animal or a human being, then we should treat it with a degree of moral consideration (p. 201). Although this is not a novel claim in the literature on AI ethics, what is new is the reason Nyholm gives to support it: we should treat robots that look like human or non-human animals with a certain degree of moral restraint out of respect for human beings or other beings with moral status. Danaher and Coeckelbergh also claim that we should treat robots with a degree of moral consideration, but the reasons they give focus on duties or rights attaching to the robots themselves (see J. Danaher, “Welcoming Robots into the Moral Circle: A Defence of Ethical Behaviourism,” Science and Engineering Ethics, (2019): 1–27 or M. Coeckelbergh, “Moral Appearances: Emotions, Robots and Human Morality,” Ethics and Information Technology, 12(3) (2010): 235–241). Nyholm disagrees with this type of reasoning and claims that until robots develop a human- or animal-like inner life, we have no direct duties to the robots themselves. Rather, it is out of respect for human beings or other beings with moral status that we should treat some robots with moral restraint. Gerdes, similarly inspired by Kant, focuses on the human agent to argue that we should avoid treating robots in cruel ways because this may corrupt the human agent’s character (see A. Gerdes, “The Issue of Moral Consideration in Robot Ethics,” SIGCAS Computers and Society, 45(3) (2015): 274–279). Nyholm’s contribution here is to extend this view such that the corruption or harm being done is against the humanity in all of us.

Similar books and articles

Sven Nyholm: Humans and Robots: Ethics, Agency, and Anthropomorphism. [REVIEW] Martin Sand - 2020 - Ethical Theory and Moral Practice 23 (2):487-489.

Humans and Robots: Ethics, Agency, and Anthropomorphism. Sven Nyholm - 2020 - Rowman & Littlefield International.

From Sex Robots to Love Robots: Is Mutual Love with a Robot Possible? Sven Nyholm & Lily Frank - 2017 - In John Danaher & Neil McArthur (eds.), Robot Sex: Social and Ethical Implications. Cambridge, MA: MIT Press. pp. 219-244.

The Retribution-Gap and Responsibility-Loci Related to Robots and Automated Technologies: A Reply to Nyholm. Roos de Jong - 2020 - Science and Engineering Ethics 26 (2):727-735.

Moral difference between humans and robots: paternalism and human-relative reason. Tsung-Hsing Ho - 2022 - AI and Society 37 (4):1533-1543.

Attributing Agency to Automated Systems: Reflections on Human–Robot Collaborations and Responsibility-Loci. Sven Nyholm - 2018 - Science and Engineering Ethics 24 (4):1201-1219.

Other Minds, Other Intelligences: The Problem of Attributing Agency to Machines. Sven Nyholm - 2019 - Cambridge Quarterly of Healthcare Ethics 28 (4):592-598.

Anthropomorphism in Human–Robot Co-evolution. Luisa Damiano & Paul Dumouchel - 2018 - Frontiers in Psychology 9:468.

Limiting the discourse of computer and robot anthropomorphism in a research group. Matthew J. Cousineau - 2019 - AI and Society 34 (4):877-888.

Robot sex and consent: Is consent to sex between a robot and a human conceivable, possible, and desirable? Lily Frank & Sven Nyholm - 2017 - Artificial Intelligence and Law 25 (3):305-323.

Why robots should not be treated like animals. Deborah G. Johnson & Mario Verdicchio - 2018 - Ethics and Information Technology 20 (4):291-301.

Robots as Malevolent Moral Agents: Harmful Behavior Results in Dehumanization, Not Anthropomorphism. Aleksandra Swiderska & Dennis Küster - 2020 - Cognitive Science 44 (7):e12872.

The Technological Future of Love. Sven Nyholm, John Danaher & Brian D. Earp - 2022 - In André Grahle, Natasha McKeever & Joe Saunders (eds.), Philosophy of Love in the Past, Present, and Future. Routledge. pp. 224-239.

Analytics

Added to PP

2022-05-02

Downloads

40 (#389,966)

6 months

9 (#295,075)
