Abstract

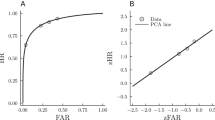

The variance of the logarithm of the likelihood ratio, a commonly used measure of bias in signal detection theory, is derived for the case of an unbiased observer and two underlying normal distributions of equal variance. This variance increases with d’ (the subject’s sensitivity to the two stimuli involved in the discrimination). Accordingly, increased deviation of the ln(LR) sample mean from a true mean of zero can be anticipated as signal level increases, even when bias and sensitivity are essentially independent.
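The derivation itself is not reproduced here. As a rough illustration only, the sketch below assumes one standard route, which may differ from the authors' own: the hit rate H and false-alarm rate F are independent binomial proportions from N_s signal and N_n noise trials, and a first-order (delta-method) approximation is applied. Under the equal-variance Gaussian model, ln(LR) at the criterion is ln β = (z(F)² − z(H)²)/2, and an unbiased observer has z(H) = d’/2 = −z(F). Both partial derivatives then have magnitude z/φ(z) with z = d’/2, giving Var(ln β) ≈ (z/φ(z))² Φ(z)(1 − Φ(z))(1/N_s + 1/N_n). The function names `ln_beta` and `var_ln_beta_unbiased` are hypothetical.

```python
from statistics import NormalDist

STD = NormalDist()  # standard normal: mean 0, sd 1


def ln_beta(hit_rate: float, fa_rate: float) -> float:
    """ln(LR) at the criterion under the equal-variance Gaussian model:
    ln(beta) = (z(F)**2 - z(H)**2) / 2."""
    z_h = STD.inv_cdf(hit_rate)
    z_f = STD.inv_cdf(fa_rate)
    return (z_f ** 2 - z_h ** 2) / 2


def var_ln_beta_unbiased(d_prime: float, n_signal: int, n_noise: int) -> float:
    """Approximate sampling variance of ln(beta) for an unbiased observer.

    Assumption (not from the source): hits and false alarms are independent
    binomial counts, and a first-order delta-method approximation is used.
    """
    # Unbiased observer: criterion midway, so z(H) = d'/2 and z(F) = -d'/2.
    z = d_prime / 2
    h = STD.cdf(z)        # hit rate; the false-alarm rate is 1 - h
    density = STD.pdf(z)  # phi(z(H)) equals phi(z(F)) by symmetry
    # |d ln(beta)/dH| = |d ln(beta)/dF| = z / phi(z)
    grad_sq = (z / density) ** 2
    # Binomial variance p(1 - p)/N is h(1 - h)/N for both H and F = 1 - h.
    return grad_sq * h * (1 - h) * (1 / n_signal + 1 / n_noise)


if __name__ == "__main__":
    # An unbiased observer has ln(beta) = 0 at the true rates.
    d = 1.5
    print(ln_beta(STD.cdf(d / 2), STD.cdf(-d / 2)))
    # The approximate variance grows with d' at a fixed number of trials.
    for d in (0.5, 1.0, 2.0, 3.0):
        print(d, var_ln_beta_unbiased(d, n_signal=100, n_noise=100))
```

In this first-order approximation the variance is zero at d’ = 0 and grows with d’, in line with the trend the abstract reports; the exact expression and any higher-order terms in the original article may differ.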

Additional information

This report was supported by Grant R01 EY02951 from the National Eye Institute and by an award from the Research Committee of the C. W. Post Center.

About this article

Cite this article

Matin, E., Valle, V. Variance of the likelihood ratio measure of bias. Bull. Psychon. Soc. 22, 248–249 (1984). https://doi.org/10.3758/BF03333821
