Abstract

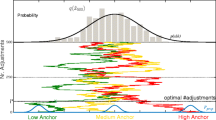

It is widely thought that in fallible reasoning potential error necessarily increases with every additional step, whether inferences or premises, in the same way that the probability of a lengthening conjunction shrinks. However, this has the absurd consequence that consulting an expert, proof-checking, filling gaps in proofs, and gathering more evidence for a given conclusion necessarily make us worse off, since they also add more steps. I will argue that the self-help steps listed here are of a distinctive type, involving composition rather than conjunction. Error grows differently over composition than over conjunction, I argue, and this dissolves the apparent paradox.

Notes

I will often use the shorter phrase “reliable belief” to mean reliably acquired belief, where the upshot is that the belief is likely to be true because of its provenance.

It is important for what comes below to note that this argument that the CT is false does not show that justifiedness always decreases when we interpose sources, even fallible ones. This is the easiest type of counterexample to see, but other types are discussed below.

Here and in what follows we will appear to be counting steps, e.g., claims, inferences, checks, in order to assess how much potential error is generated. One might wonder what individuates the steps. What if a person has a belief that it is a gray house? Does this count as one claim—that it is a gray house—or two—that it is a house and that it is a gray house? The answer is straightforward. It is one or two depending on whether it contributes as two beliefs or one in the actual inferences, beliefs, and checking procedures of the subject in the argument in question. How we count thus depends on how one defines what it is for a belief that a person possesses to be a basis for inference, checking, or other beliefs, but we do not need to take a stand on that question here.

In this sense the problem is very different from the preface paradox in which the conclusion whose reliability or probability we are interested in is itself a conjunction of the premises. My discussions in this paper provide nothing helpful to those difficulties since the conjunction rule is the correct one for that question.

If z is Pr(R), y is Pr(p/R), and x represents Pr(p/-R), then Pr(p) > Pr(R), i.e., Pr_j(q) > Pr_i(q), iff y + (x/z)(1 - z) > 1. Note that the left-hand side of this inequality is not a probability; the formula is a relation between probabilities.
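
The equivalence stated in this note can be checked in a few lines using the law of total probability. The sketch below uses the note's own symbols and assumes z > 0 so that dividing by z is legitimate; the numbers in the closing remark are illustrative and not taken from the paper.

```latex
\begin{align*}
\Pr(p) &= \Pr(p \mid R)\,\Pr(R) + \Pr(p \mid \neg R)\,\Pr(\neg R) = yz + x(1 - z), \\
\Pr(p) > \Pr(R) &\iff yz + x(1 - z) > z \\
&\iff y + \frac{x}{z}\,(1 - z) > 1 \qquad \text{(dividing both sides by } z > 0\text{)}.
\end{align*}
```

For instance, with z = 0.5, y = 0.9, and x = 0.6, we get Pr(p) = 0.45 + 0.30 = 0.75 > 0.5, and the left-hand side of the final inequality is 0.9 + 1.2(0.5) = 1.5, which exceeds 1 and so cannot itself be a probability.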

The problem is that any inference from a claim to a claim that it logically implies must be given probability 1 or rational confidence 1, on pain of incoherence, simply because it is in fact an implication. In cases of logical and other necessary truths probability does not track epistemic legitimacy but the relations the propositions stand in as a matter of fact. Because of this, the fallibility of logical inference cannot even be represented on normal axiomatizations. However, there are re-axiomatizations that would allow the points here to be made for demonstrative proof because they allow logical propositions to be given non-extreme probabilities (see Hacking 1967; Garber 1983). Garber’s view has the advantage of preserving coherence.

If the argument has more than one premise, we imagine all of them loaded into A in the first line. The total probability model can be generalized to multiple premises, but it will have more terms because we are splitting the conjunction of all the premises into a list of conjuncts.
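
One way to see why the multi-premise version has more terms is to partition on every combination of truth values of the premises. The following is a minimal sketch under assumptions that are not in the paper: the function names, the example probabilities, and in particular the independence of the premises are all hypothetical and chosen only for illustration.

```python
from itertools import product


def independent_joint(premise_probs):
    """Joint distribution over truth assignments to the premises.

    ASSUMPTION (for illustration only): the premises are probabilistically
    independent, so each assignment's weight is a simple product.
    """
    joint = {}
    for assignment in product([True, False], repeat=len(premise_probs)):
        weight = 1.0
        for is_true, p in zip(assignment, premise_probs):
            weight *= p if is_true else 1.0 - p
        joint[assignment] = weight
    return joint


def total_probability(joint, likelihood):
    """Pr(C) = sum over assignments a of Pr(C | a) * Pr(a)."""
    return sum(likelihood[a] * joint[a] for a in joint)


# Hypothetical numbers: two premises and a conclusion C.
premise_probs = [0.9, 0.8]
joint = independent_joint(premise_probs)

# Hypothetical values of Pr(C | assignment) for each of the 2**2 cells.
likelihood = {
    (True, True): 0.95,
    (True, False): 0.50,
    (False, True): 0.40,
    (False, False): 0.10,
}

pr_c = total_probability(joint, likelihood)
print(len(joint), round(pr_c, 3))
```

With n premises the partition has 2**n cells, which is the sense in which the generalized model carries more terms than the single-premise case.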

The argument here works for defeasible arguments, and would need to be redrawn for demonstrative proofs in a probabilistic framework that could give non-extreme probabilities to necessary truths (see footnote 7).

This can be derived beginning with total probability on N1 and on N2 (via B), which yields that if Pr(B) > 0 then Pr(N1)/Pr(N2) < Pr(N1/-B)/Pr(N2/-B). This combined with Bayes’ theorem applied to Pr(-B/N1) and Pr(-B/N2) gives that Pr(-B/N1) > Pr(-B/N2).

References

Detlefsen, M., & Luker, M. (1980). The four-color theorem and mathematical proof. The Journal of Philosophy, LXXVII, 803–820.

Garber, D. (1983). Old evidence and logical omniscience in Bayesian confirmation theory. In J. Earman (Ed.), Testing scientific theories (Vol. X, pp. 99–131). Minneapolis: Minnesota Studies in the Philosophy of Science.

Goldman, A. (2008). What is justified belief? In Epistemology: An anthology, 2nd edn. (pp. 333–347). Malden: Blackwell Publishing.

Hacking, I. (1967). Slightly more realistic personal probability. Philosophy of Science, 34(4), 311–325.

Jeshion, R. (1998). Proof checking and knowledge by intellection. Philosophical Studies, 92, 85–112.

Kitcher, P. (1984). The nature of mathematical knowledge. Oxford: Oxford University Press.

Resnik, M. D. (1989). Computation and mathematical empiricism. Philosophical Topics, XVII, 129–143.

Vogel, J. (2006). Externalism resisted. Philosophical Studies, 131, 729–742.

Acknowledgments

This study was supported in large part by NSF grant SES-0823418.

Cite this article

Roush, S. Justification and the growth of error. Philos Stud 165, 527–551 (2013). https://doi.org/10.1007/s11098-012-9967-7

DOI: https://doi.org/10.1007/s11098-012-9967-7